Class-Adaptive Layer Fusion

CLASP fuses multi-layer vision features according to the instruction category instead of relying on a fixed single-layer token representation.


arXiv 2026


The pruning budget is split between relevance-preserving pivot tokens and coverage-preserving completion tokens for more robust compression.

Under aggressive compression (88.9% of visual tokens pruned), CLASP still preserves 94.7% of the original performance and remains strong across multiple MLLM backbones.

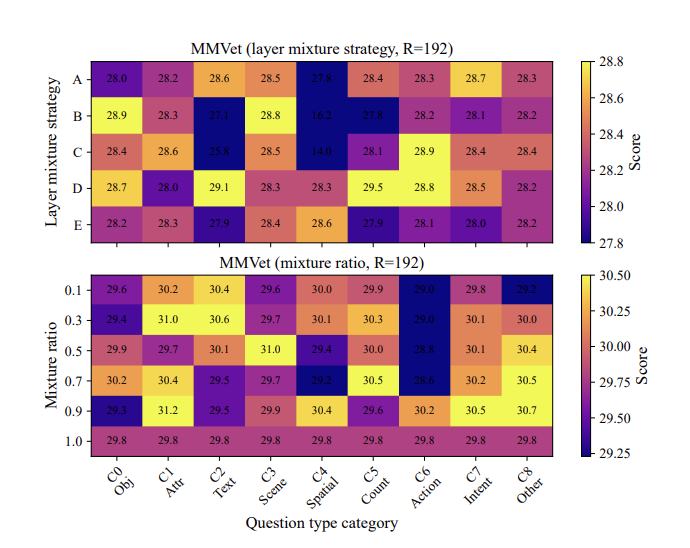

CLASP studies one of the most persistent efficiency problems in multimodal large language models: visual token redundancy. Existing pruning methods often rely on a single vision layer and a fixed pruning rule, which makes them brittle when the prompt changes or when the task requires a different balance between local detail and global coverage. CLASP addresses this by making both the feature construction stage and the pruning stage adaptive to the semantic class of the instruction.

The core argument of the paper is simple but important: if different prompt types need different visual evidence, then fixed token reduction strategies will inevitably waste budget on the wrong patches or over-prune critical details. CLASP turns token reduction into a prompt-conditioned decision process instead of a one-size-fits-all heuristic.

The framework first fuses multiple vision encoder layers to construct category-aware visual representations instead of depending on a single-layer feature map. It then performs dual-stage pruning. In the first stage, attention-salient pivot tokens preserve relevance to the instruction. In the second stage, redundancy-aware completion tokens maintain coverage over the scene. This design aims to preserve both “what matters most” and “what would otherwise be lost.”
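A minimal sketch of the fusion stage follows, assuming class-adaptive fusion can be modeled as a softmax-weighted sum of per-layer token features with one weight vector per instruction category. The four-layer setup, the category names, the `CATEGORY_LAYER_LOGITS` values, and the helper names are hypothetical illustrations, not taken from the paper; in a real system such weights would presumably be learned or calibrated.

```python
import numpy as np

# Hypothetical per-category logits over four candidate encoder layers
# (shallow -> deep). Illustrative values only, not taken from the paper.
CATEGORY_LAYER_LOGITS = {
    "ocr": np.array([0.0, 0.5, 2.0, 1.0]),
    "counting": np.array([0.5, 1.5, 1.0, 0.5]),
    "grounding": np.array([0.0, 1.0, 1.5, 1.5]),
    "open_ended": np.array([0.0, 0.0, 0.5, 2.0]),
}


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - x.max())
    return e / e.sum()


def fuse_layers(layer_feats: np.ndarray, category: str) -> np.ndarray:
    """Fuse (num_layers, num_tokens, dim) features into (num_tokens, dim)."""
    logits = CATEGORY_LAYER_LOGITS[category]
    if logits.shape[0] != layer_feats.shape[0]:
        raise ValueError("expected one logit per encoder layer")
    weights = softmax(logits)  # non-negative, sums to 1
    # Contract the layer axis: sum_l weights[l] * layer_feats[l]
    return np.tensordot(weights, layer_feats, axes=1)


# Usage with random stand-in features (assumed shapes).
rng = np.random.default_rng(0)
feats = rng.standard_normal((4, 576, 1024))
fused = fuse_layers(feats, "ocr")
print(fused.shape)  # (576, 1024)
```

Because the weights depend only on the category, switching from an OCR prompt to an open-ended one changes which encoder depths dominate the token representation without changing the number of tokens.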

CLASP combines class-adaptive feature fusion with dual-stage pruning to reduce visual tokens without collapsing task robustness.
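The dual-stage pruning step can be sketched as follows, assuming fused token features and one relevance score per token (for example, instruction-to-visual attention). The function name and the `pivot_ratio` budget-split parameter are hypothetical. Stage 1 keeps the highest-scoring tokens as pivots. For stage 2, this sketch swaps in a greedy max-min cosine-diversity rule as a stand-in for the paper's redundancy criterion, which may differ.

```python
import numpy as np


def dual_stage_prune(tokens, attn, budget, pivot_ratio=0.5):
    """Return sorted indices of the visual tokens to keep.

    tokens:      (N, D) fused visual token features.
    attn:        (N,) relevance scores, e.g. instruction-to-visual attention.
    budget:      total number of tokens to keep.
    pivot_ratio: hypothetical fraction of the budget spent on pivots.
    """
    n = tokens.shape[0]
    budget = min(budget, n)
    num_pivots = max(0, min(int(round(budget * pivot_ratio)), budget))

    # Stage 1: pivot tokens are the most attention-salient ones (relevance).
    order = np.argsort(-attn, kind="stable")
    kept = [int(i) for i in order[:num_pivots]]

    # Stage 2: completion tokens for coverage. Repeatedly add the remaining
    # token whose highest cosine similarity to any kept token is lowest.
    normed = tokens / (np.linalg.norm(tokens, axis=1, keepdims=True) + 1e-8)
    remaining = np.ones(n, dtype=bool)
    if kept:
        remaining[kept] = False
        max_sim = (normed @ normed[kept].T).max(axis=1)
    else:
        max_sim = np.full(n, -np.inf)
    while len(kept) < budget:
        cand = np.where(remaining, max_sim, np.inf)  # mask out kept tokens
        j = int(np.argmin(cand))
        kept.append(j)
        remaining[j] = False
        max_sim = np.maximum(max_sim, normed @ normed[j])
    # Sorting restores the tokens' original raster order.
    return np.sort(np.array(kept, dtype=int))


# Usage with random stand-in inputs: keep 64 of 576 tokens, half as pivots.
rng = np.random.default_rng(1)
keep = dual_stage_prune(rng.standard_normal((576, 64)), rng.random(576), budget=64)
print(len(keep))  # 64
```

Splitting the budget this way means a prompt whose attention concentrates on a few patches still leaves room for tokens from the rest of the scene, which is the relevance-versus-coverage balance the two stages are meant to strike.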

Visual Token Redundancy: CLASP targets the heavy computational overhead caused by long visual token sequences in multimodal large language models.

Class-Adaptive Layer Fusion: the framework builds category-specific visual representations instead of relying on a fixed single-layer feature map.

Dual-Stage Pruning: attention-salient pivot tokens preserve relevance, while redundancy-aware completion tokens maintain coverage over the full scene.

Aggressive Compression: CLASP retains 94.7% normalized performance even when 88.9% of visual tokens are pruned.

Paper Resource: the full method and experiments are available on arXiv.
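To make the 88.9% pruning figure concrete, the arithmetic below converts a pruning ratio into a retained-token count. It assumes a 576-token visual input (a 24 × 24 patch grid, as produced by LLaVA-1.5's vision encoder); this page does not state which backbone or input length the 88.9% setting refers to. The helper names are hypothetical.

```python
def kept_tokens(total_tokens: int, prune_ratio: float) -> int:
    """Number of visual tokens left after pruning a fraction `prune_ratio`."""
    return round(total_tokens * (1.0 - prune_ratio))


def pruning_ratio(total_tokens: int, kept: int) -> float:
    """Fraction of visual tokens removed."""
    return 1.0 - kept / total_tokens


# Assumed input length: 576 visual tokens (not stated on this page).
total = 576
kept = kept_tokens(total, 0.889)
print(kept)                                 # 64
print(f"{pruning_ratio(total, kept):.1%}")  # 88.9%
# Pairwise attention among visual tokens shrinks quadratically with their count.
print(f"{(kept / total) ** 2:.2%}")         # 1.23%
```

Under this assumption, 88.9% pruning keeps one token in nine, so only about 1/81 of the visual-to-visual attention pairs remain.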

CLASP is useful because it frames efficiency as a conditional modeling problem rather than a hard-coded compression rule. That is a better fit for real multimodal systems, where the visual evidence needed for OCR, counting, grounding, or open-ended reasoning can be very different.

The practical implication is that token pruning does not have to be a crude tradeoff between speed and quality. With prompt-conditioned fusion and budget allocation, a model can cut most of the visual sequence while still preserving the evidence required for robust inference.

@article{dang2026clasp,
  title={CLASP: Class-Adaptive Layer Fusion and Dual-Stage Pruning for Multimodal Large Language Models},
  author={Dang, Yunkai and Jiang, Yizhu and Jiang, Yifan and Fan, Qi and Shi, Yinghuan and Li, Wenbin and Gao, Yang},
  journal={arXiv preprint arXiv:2604.12767},
  year={2026}
}