Multi-Feature Fusion for RS Scenes

The model combines global context with fine-grained local features so that small structures and complex scene layouts are preserved.


arXiv 2025


Visual evidence is injected back into the language model during generation to reduce visual forgetting in long reasoning chains.

The paper reports 65.76% VQA accuracy, 74.51% average Top-1 classification accuracy, and state-of-the-art captioning results for FUSE-RSVLM on multiple remote sensing (RS) benchmarks.

FUSE-RSVLM is motivated by a recurring mismatch between generic vision-language models and remote sensing imagery. Earth observation scenes differ from natural images in scale, spatial layout, object density, and the importance of very small structures. As a result, models that work well on natural-image VQA or captioning often fail to preserve fine-grained evidence or gradually lose visual grounding during long language decoding.

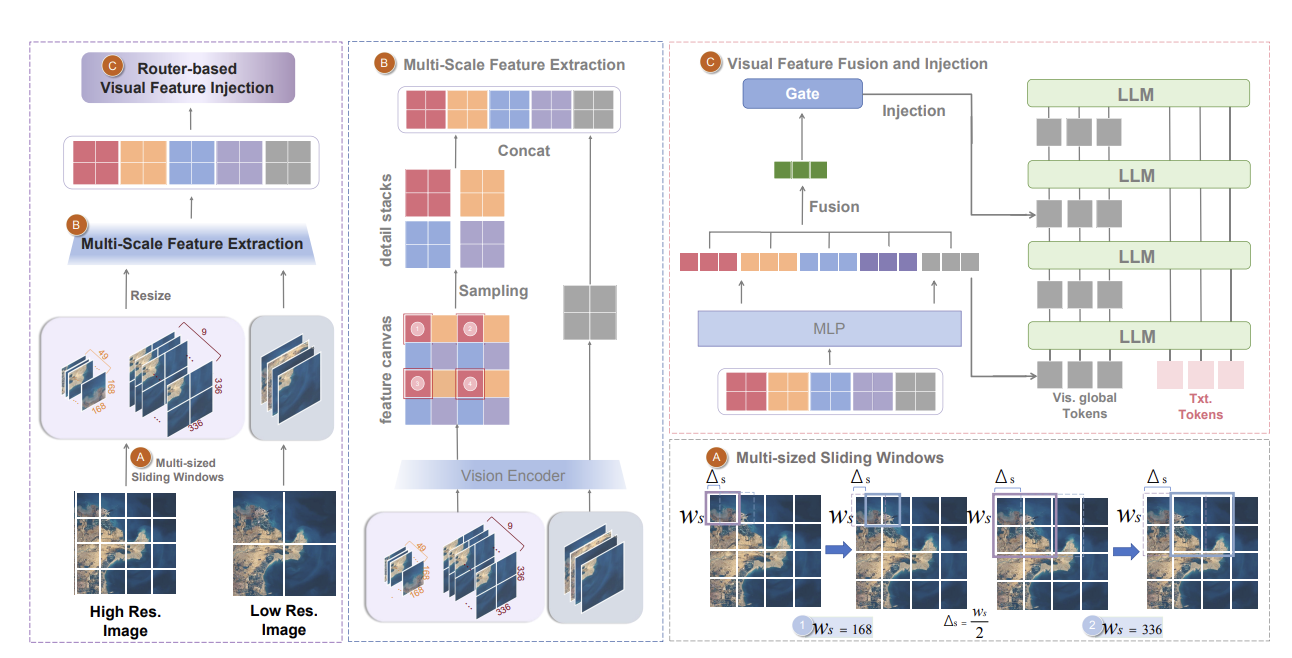

The paper addresses this by building a remote-sensing-oriented VLM that performs stronger multi-scale feature extraction and repeatedly re-injects visual evidence into the language model. The goal is not only better static image encoding, but also less visual forgetting during generation.

The model learns and fuses complementary visual representations at multiple scales, combining global scene context with localized detail features. This is especially important in remote sensing, where a large scene overview is necessary but tiny local structures often determine the answer. On top of that, FUSE-RSVLM uses recurrent visual feature injection so that visual evidence is not consumed only once at the beginning of decoding, but can keep influencing later reasoning steps.
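The following rough illustration shows why combining scales helps. It builds one coarse global token from an average-pooled copy of the image and one token per full-resolution tile, then concatenates them with a flag that marks each token's scale. Everything here is an assumption for illustration. The helper names (`avg_pool`, `tiles`, `encode`, `fuse`) are hypothetical, and `encode` is a toy statistic standing in for a real vision encoder. The thumbnail-plus-tiles concatenation is a generic pattern, not the fusion module described in the paper.

```python
from typing import List

Grid = List[List[float]]


def avg_pool(img: Grid, k: int) -> Grid:
    """Downsample by averaging non-overlapping k x k windows (coarse, global view)."""
    h, w = len(img), len(img[0])
    return [
        [sum(img[i + di][j + dj] for di in range(k) for dj in range(k)) / (k * k)
         for j in range(0, w - w % k, k)]
        for i in range(0, h - h % k, k)
    ]


def tiles(img: Grid, t: int) -> List[Grid]:
    """Split the full-resolution image into non-overlapping t x t tiles (local, fine views)."""
    h, w = len(img), len(img[0])
    return [
        [row[j:j + t] for row in img[i:i + t]]
        for i in range(0, h - h % t, t)
        for j in range(0, w - w % t, t)
    ]


def encode(view: Grid) -> List[float]:
    """Hypothetical stand-in for a vision encoder: mean, max, and contrast of a view."""
    vals = [v for row in view for v in row]
    return [sum(vals) / len(vals), max(vals), max(vals) - min(vals)]


def fuse(img: Grid, pool: int = 4, tile: int = 4) -> List[List[float]]:
    """Concatenate one global token with per-tile local tokens.

    The first entry of each token is a scale flag: 1.0 = global context, 0.0 = local detail.
    """
    tokens = [[1.0] + encode(avg_pool(img, pool))]
    tokens += [[0.0] + encode(t) for t in tiles(img, tile)]
    return tokens
```

Consider an 8×8 image that contains a single bright pixel. After pooling, the global token's max drops to 0.0625, while the tile containing the pixel keeps a max of 1.0. That preserved value is the kind of small-structure signal the local tokens are meant to carry.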

FUSE-RSVLM fuses multi-scale remote sensing features and repeatedly injects them into the language model to reduce visual forgetting.
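A toy decoding loop can make the forgetting argument concrete. In the sketch below, the hidden state decays at every step, standing in for later tokens diluting earlier evidence. Visual context is obtained by softmax attention over visual tokens and added either only at the first step or at regular intervals. The decay factor, the fixed gate, and all names (`attend`, `decode`, `inject_every`) are hypothetical. This is not FUSE-RSVLM's injection mechanism. It only illustrates why re-reading visual features during generation keeps them present in the state.

```python
import math
from typing import List

Vec = List[float]


def attend(query: Vec, keys: List[Vec]) -> Vec:
    """Softmax dot-product attention of the hidden state over visual tokens (values = keys)."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    z = sum(weights)
    return [sum(w * key[d] for w, key in zip(weights, keys)) / z for d in range(len(query))]


def decode(visual: List[Vec], steps: int, inject_every: int,
           decay: float = 0.7, gate: float = 0.5) -> List[Vec]:
    """Toy recurrent decoder (hypothetical).

    The state decays each step. Visual context is added at step 0 and then
    every `inject_every` steps (0 means inject only at the start).
    """
    h = [0.0] * len(visual[0])
    states = []
    for t in range(steps):
        h = [decay * x for x in h]  # older visual evidence fades
        if t == 0 or (inject_every and t % inject_every == 0):
            ctx = attend(h, visual)  # re-read the visual tokens
            h = [x + gate * c for x, c in zip(h, ctx)]
        states.append(h)
    return states
```

Take two orthogonal visual tokens and 10 decoding steps. Injecting only at the start leaves about 0.01 per dimension in the final state, whereas re-injecting at every step keeps it near 0.81.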

Remote Sensing Mismatch: generic vision-language models often miss fine-grained geospatial evidence and gradually lose visual grounding during long decoding chains.

Multi-Feature Fusion: FUSE-RSVLM combines global scene context with localized detail features so that small but important structures remain visible to the model.

Recurrent Visual Injection: visual evidence is repeatedly fed back into the language model to reduce visual forgetting during captioning, VQA, and classification.

Strong Results: the paper reports 65.76% VQA accuracy and 74.51% average Top-1 accuracy across remote-sensing classification benchmarks.

Paper Resource: the full method and experiments are available on arXiv (arXiv:2512.24022).

FUSE-RSVLM is more than another remote-sensing fine-tune. Its main value is that it explicitly treats visual grounding as a process that must be maintained throughout the reasoning chain. That makes it better suited to captioning, VQA, and classification on high-resolution geospatial imagery than designs that consume visual features only once.

The experimental results also show that remote-sensing-specific modeling choices still matter even in the era of general VLMs. Multi-scale visual fusion and recurrent grounding remain strong advantages when the visual signal is dense, fine-grained, and spatially structured.

@article{dang2025fusersvlm,
  title={FUSE-RSVLM: Feature Fusion Vision-Language Model for Remote Sensing},
  author={Dang, Yunkai and Wang, Donghao and Yang, Jiacheng and Jiang, Yifan and Zhu, Meiyi and Yang, Yuekun and Wang, Cong and Fan, Qi and Li, Wenbin and Gao, Yang},
  journal={arXiv preprint arXiv:2512.24022},
  year={2025}
}