Multi-Level Correlation Modeling

MLCN reasons over self-correlation, cross-correlation, and pattern-correlation instead of relying on a single global similarity score.

ICME 2023

The framework strengthens support-query matching with local structural cues that transfer better under scarce supervision.

The model reports 65.54% and 81.63% accuracy on miniImageNet in the 1-shot and 5-shot settings, respectively, with further gains on CUB and CIFAR-FS.

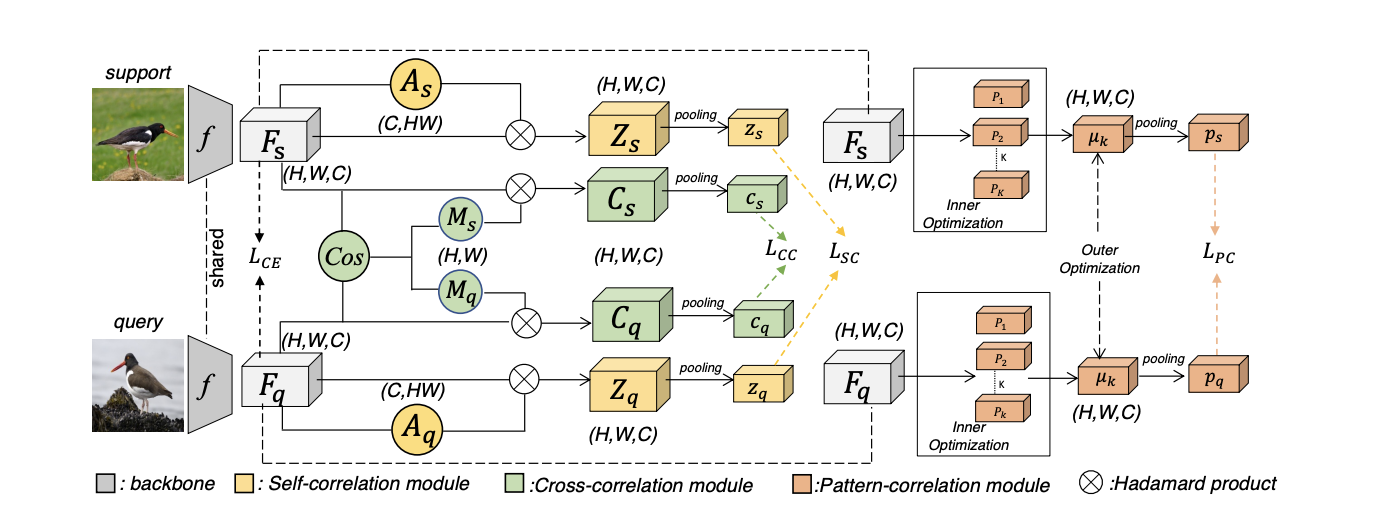

Multi-Level Correlation Network (MLCN) targets few-shot image classification, where the model must generalize to novel classes from only a handful of labeled examples. The paper argues that many metric-learning approaches compare support and query images at only one representation level, which is often too weak for fine-grained transfer. When training data is scarce, the model needs a richer notion of correspondence than one global feature similarity.

MLCN addresses this by explicitly modeling local semantic correspondence and structural pattern similarity across multiple levels of representation. The emphasis is not just on stronger feature extraction, but on better transferability from base classes to novel classes.

The model introduces three complementary components. The self-correlation module captures internal local structure. The cross-correlation module models correspondence between support and query images. The pattern-correlation module then focuses on recurring structural patterns that are useful in fine-grained recognition. Together, these modules produce a multi-level similarity signal that is more expressive than standard prototype matching.
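To make the first two ideas concrete, here is a minimal NumPy sketch of what self-correlation and cross-correlation over local features can look like. It is an illustration under stated assumptions, not the paper's implementation: the function names (`self_correlation`, `cross_correlation`, `local_match_score`), the cosine-similarity choice, the neighbourhood `radius`, and the max-then-mean aggregation are all hypothetical. The pattern-correlation module is left out because the description above does not specify it in enough detail to sketch faithfully.

```python
import numpy as np


def l2_normalize(x, axis, eps=1e-8):
    """Scale vectors along `axis` to (approximately) unit length."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)


def self_correlation(feat, radius=1):
    """Cosine similarity between each location and its spatial neighbours.

    feat: (C, H, W) feature map of one image.
    Returns (K, H, W) with K = (2 * radius + 1) ** 2, one channel per
    neighbour offset. Borders are zero-padded (an assumption).
    """
    _, h, w = feat.shape
    f = l2_normalize(feat, axis=0)
    padded = np.pad(f, ((0, 0), (radius, radius), (radius, radius)))
    maps = []
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            shifted = padded[:, dy:dy + h, dx:dx + w]
            maps.append((f * shifted).sum(axis=0))
    return np.stack(maps)


def cross_correlation(support, query):
    """Cosine similarity between every support and every query location.

    support, query: (C, H, W) feature maps with the same channel count.
    Returns an (H*W, H*W) matrix; rows index support locations,
    columns index query locations.
    """
    c = support.shape[0]
    s = l2_normalize(support.reshape(c, -1), axis=0)
    q = l2_normalize(query.reshape(c, -1), axis=0)
    return s.T @ q


def local_match_score(support, query):
    """Hypothetical aggregation: best support match per query location,
    averaged over all query locations."""
    corr = cross_correlation(support, query)
    return float(corr.max(axis=0).mean())


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    support = rng.standard_normal((8, 5, 5))
    query = rng.standard_normal((8, 5, 5))
    print(self_correlation(support).shape)        # (9, 5, 5)
    print(cross_correlation(support, query).shape)  # (25, 25)
    print(local_match_score(support, query))
```

In contrast to comparing two globally pooled feature vectors, the correlation matrix keeps one score per pair of locations, so a query image can match a support image even when the discriminative part appears at a different position.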

MLCN compares images through multi-level local correspondences instead of relying only on one global similarity score.

MLCN is a useful reminder that few-shot learning is often limited as much by the quality of the similarity function as by the backbone. When supervision is scarce, local relational structure becomes a major source of transferable signal.

The broader lesson also remains relevant outside classic few-shot classification: multi-level correspondence is often more robust than single-scale matching whenever fine-grained recognition and data efficiency matter.

@inproceedings{dang2023mlcn,
  title={Multi-level correlation network for few-shot image classification},
  author={Dang, Yunkai and Zhang, Min and Chen, Zhengyu and Zhang, Xinliang and Wang, Zheng and Sun, Meijun and Wang, Donglin},
  booktitle={IEEE International Conference on Multimedia and Expo (ICME)},
  year={2023}
}